Hello everyone and welcome to the second tutorial of the OpenGL4 tutorial series! As promised, this one is going to be really, really long, as it covers some of the most fundamental concepts of rendering in modern OpenGL, namely Shaders, Shader Programs, Vertex Buffer Objects and Vertex Array Objects. So get yourself a cup of coffee or tea, because this one will definitely take some time to go through!

In the good old days, in the 1990s and 2000s, rendering in OpenGL was pretty straightforward. You did not have to care much about how stuff works and you simply called a few predefined functions (the most notable being glBegin() and glEnd()). Typical rendering code from the pre-shader era could look like this:

    glBegin(GL_TRIANGLES);
        glColor3ub(255, 0, 0); // Red color
        glVertex2f(0.0f, 4.0f);
        glColor3ub(0, 255, 0); // Green color
        glVertex2f(-2.0f, 0.0f);
        glColor3ub(0, 0, 255); // Blue color
        glVertex2f(2.0f, 0.0f);
    glEnd();

At first sight, it looks nice, easy and readable, so where's the problem? Why did we go from this to some complicated stuff (that you will learn about later in this tutorial)? The reason is very simple - this easy-to-use approach has two main drawbacks: it is slow, because so many function calls keep sending possibly the same numbers over and over again, and it gives us no way to program the rendering pipeline ourselves.

To fully use the capabilities of modern GPUs, we simply have to program the rendering pipeline ourselves. Shaders are programs that run directly on the GPU, usually in parallel on many objects at once (vertices, fragments etc.). If you're new to OpenGL and rendering, it might sound to you like shaders are something that creates shades. But actually, shaders are arbitrary programs that process the vertices and other data we send to them, and in the end they produce the final image. At the time of writing this article (September 2018), there are the following shader types: vertex, tessellation control, tessellation evaluation, geometry, fragment and compute shaders.

In this tutorial, we care about the vertex and fragment shaders only (other types will be covered in future tutorials). So let's find out right now how you can use those shaders!

To keep everything systematic, we will create a Shader class that handles loading, using and deleting of a shader. We will simply wrap the low-level OpenGL calls in a higher-level class:

    class Shader
    {
    public:
        bool loadShaderFromFile(const std::string& fileName, GLenum shaderType);
        bool isLoaded() const;
        void deleteShader();

        GLuint getShaderID() const;
        GLenum getShaderType() const;

    private:
        // Reads the lines of the given file into result
        bool getLinesFromFile(const std::string& fileName, std::vector<std::string>& result, bool isReadingIncludedFile = false);

        GLuint _shaderID;
        GLenum _shaderType;
        bool _isLoaded = false;
    };

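As you can see, this class exposes several methods, most of which are pretty self-explanatory. Briefly: loadShaderFromFile() loads the shader source from a file and compiles it as a shader of the given type, isLoaded() tells whether the shader has been loaded and compiled successfully, deleteShader() deletes the shader, and getShaderID() and getShaderType() return the OpenGL ID and the type of the shader. The private getLinesFromFile() reads the lines of the source file into a vector of strings.

Now you should have a brief overview of what every function of this class does. But it's still important to understand how the shaders are loaded, so let me guide you through the most important function - loadShaderFromFile():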

    bool Shader::loadShaderFromFile(const std::string& fileName, GLenum shaderType)
    {
        std::vector<std::string> fileLines;
        if (!getLinesFromFile(fileName, fileLines))
            return false;

        // OpenGL wants a C-style array of C-style strings
        const char** sProgram = new const char*[fileLines.size()];
        for (size_t i = 0; i < fileLines.size(); i++)
            sProgram[i] = fileLines[i].c_str();

        _shaderID = glCreateShader(shaderType);
        glShaderSource(_shaderID, (GLsizei)fileLines.size(), sProgram, nullptr);
        glCompileShader(_shaderID);
        delete[] sProgram;

        int compilationStatus;
        glGetShaderiv(_shaderID, GL_COMPILE_STATUS, &compilationStatus);
        if (compilationStatus == GL_FALSE)
        {
            // Print the compiler log to help with debugging the shader
            char infoLogBuffer[2048];
            int logLength;
            glGetShaderInfoLog(_shaderID, 2048, &logLength, infoLogBuffer);
            std::cout << "Error! Shader file " << fileName << " wasn't compiled! The compiler returned:" << std::endl << std::endl << infoLogBuffer << std::endl;
            return false;
        }

        _shaderType = shaderType;
        _isLoaded = true;
        return true;
    }

The function starts by reading the whole text as a list of std::strings. This is done by calling the magical function getLinesFromFile, which you can examine on your own - long story short, it gets all the lines of text from the file. If it fails for whatever reason, we simply return from the function prematurely. Once that's done, we have to convert the std::vector<std::string> to a C-style array of C-style strings, const char** sProgram, because that is what OpenGL requires in later function calls (OpenGL is pretty low-level, so we have to provide the data in a low-level manner).
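The conversion described above could also be sketched without manual new[] / delete[], assuming a temporary std::vector<const char*> is acceptable. The helper name toCStringArray is hypothetical and is not part of the tutorial's code:

```cpp
#include <string>
#include <vector>

// Hypothetical helper (not from the tutorial): collects one C-style pointer
// per line. The pointers stay valid only while `lines` is alive and
// unmodified, because they point into the std::string objects themselves.
std::vector<const char*> toCStringArray(const std::vector<std::string>& lines)
{
    std::vector<const char*> result;
    result.reserve(lines.size());
    for (const std::string& line : lines)
        result.push_back(line.c_str());
    return result;
}
```

With such a helper, result.data() could be passed to glShaderSource in place of sProgram and result.size() in place of the line count, and there would be nothing to delete afterwards.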

Now we can start creating the shader. The function glCreateShader(shaderType) creates a new shader of the specified type within the OpenGL context. We get an ID that we will use in later function calls related to this shader. Then we must provide the source code of the shader with the glShaderSource(_shaderID, (GLsizei)fileLines.size(), sProgram, nullptr) function. It takes the shader ID (which we have from the previous glCreateShader call), the number of strings (lines, in our case) and the lines themselves as a double pointer to char (a pointer to pointers to char, essentially a C-style array of strings). The last parameter is practically always nullptr - if your strings weren't null-terminated (which I have honestly never seen), you would provide an array of string lengths there. Because we don't provide it, OpenGL assumes that our strings are null-terminated (just like normal C-style strings).

We can compile the shader now by calling glCompileShader(_shaderID). Afterwards, we can (and we should!) delete the array of C-strings we created, so that we have no memory leaks! Then we can proceed with asking about the compilation status by calling glGetShaderiv(_shaderID, GL_COMPILE_STATUS, &compilationStatus). If the compilation status does not report success (it equals GL_FALSE), we examine the problem further by extracting the compilation log and outputting it to the console! The information found there may help you debug your shader code.

When we successfully perform everything, we are done with loading a single shader! That's nice, but we are still far from rendering anything.

In order to render something, we have to use a Shader Program, not a shader alone. But what is a Shader Program? It's actually not very difficult to understand - it's just several shaders combined that together perform the rendering. To draw something reasonable, we definitely need at least a vertex and a fragment shader, put together into a shader program. The purpose of shader programs is that you can reuse the same vertex / fragment / geometry shaders, but combine them differently (for example, you can create two shader programs with the same vertex processing but different fragment processing - one with shadows, one without). So that's the point of them, and below you can see the ShaderProgram class definition:

    class ShaderProgram
    {
    public:
        void createProgram();
        bool addShaderToProgram(const Shader& shader);
        bool linkProgram();
        void useProgram();
        void deleteProgram();

        GLuint getShaderProgramID() const;

        Uniform& operator[](const std::string& varName);
        void setModelAndNormalMatrix(const std::string& modelMatrixName, const std::string& normalMatrixName, const glm::mat4& modelMatrix);

    private:
        Uniform& getUniform(const std::string& varName);

        GLuint _shaderProgramID;
        bool _isLinked = false;
        std::map<std::string, Uniform> _uniforms; // Uniforms looked up by variable name
    };

What is the workflow of using this class? First of all, you have to create the shader program by calling the createProgram() method. This calls the OpenGL function glCreateProgram(), which, like all related OpenGL create functions, returns the ID of the created shader program. After that, we can start adding SHADERS to our SHADER PROGRAM. Because we already have our high-level class Shader, we can directly add a compiled shader to our shader program by calling the addShaderToProgram(shader) method. Internally, it calls the OpenGL function glAttachShader(shaderProgramID, shaderID), which requires the IDs of both the program and the shader. In our Shader class, we retrieve the shader ID by calling getShaderID().

When we're done adding shaders to our shader program, we can finally LINK it. It's really a similar concept to the one you know from C++ - you get a bunch of compiled .obj files that you link together to get the final executable. The shaders that we have added to our program are like the .obj files and the shader program is the final executable. Our high-level class has a function linkProgram() that does exactly that; internally, it calls its OpenGL counterpart glLinkProgram(shaderProgramID). When we successfully link the program, we can finally use it for rendering! The function in our class that does that is called useProgram() and it calls the OpenGL counterpart glUseProgram(shaderProgramID). You can have several shader programs prepared and just switch between them during rendering, but only one shader program can be active at any time! So to render things with two different shader programs, you first render one set of objects with the first program, then use the other program and render another set of objects.

Now that the important terms are clear, we can move on to another very important feature in OpenGL: Vertex Buffer Objects!

As mentioned at the beginning, rendering in old OpenGL was really slow because of too many function calls that kept sending possibly the same numbers over and over again. Of course this cannot be fast! That's why Vertex Buffer Objects (VBOs) were introduced, and it was a pretty long time ago - first as the ARB_vertex_buffer_object extension and then as a core feature in OpenGL 1.5, released in 2003 (see also the VBO whitepaper). The idea is super simple - instead of transferring the data every single frame, why not just store it in GPU memory once and then reuse it?

And that is exactly what VBOs are all about. You just store your rendering data (models / worlds / whatever) on the GPU once and render it A LOT faster! The data you upload can be reused every frame and because it resides in GPU memory, access to it is about as fast as it can get! The buffer is just an array of bytes; the way you interpret those bytes is up to you (we will get to that soon). Now, let's wrap the low-level VBO in a higher-level VertexBufferObject class:

    class VertexBufferObject
    {
    public:
        void createVBO(uint32_t reserveSizeBytes = 0);
        void bindVBO(GLenum bufferType = GL_ARRAY_BUFFER);

        void addRawData(const void* ptrData, uint32_t dataSizeBytes, int repeat = 1);

        // Adds any object (or a whole static array) as raw bytes
        template<typename T>
        void addData(const T& obj, int repeat = 1)
        {
            addRawData(&obj, sizeof(T), repeat);
        }

        void* getRawDataPointer();
        void uploadDataToGPU(GLenum usageHint);

        void* mapBufferToMemory(GLenum usageHint);
        void* mapSubBufferToMemory(GLenum usageHint, uint32_t offset, uint32_t length);
        void unmapBuffer();

        GLuint getBufferID();
        uint32_t getBufferSize();

        void deleteVBO();

    private:
        GLuint _bufferID = 0;
        int _bufferType;
        uint32_t _uploadedDataSize;
        std::vector<unsigned char> _rawData; // Bytes collected before the upload
        bool _isDataUploaded = false;
    };

The idea behind this class is that you can comfortably add data to the buffer and then upload it to the GPU all at once. The implementation behind it uses std::vector<unsigned char>, which is basically a dynamic byte array, and every time you add data to the vector, you just add raw bytes.

It's worth mentioning the intended workflow of this class (which is also how the OpenGL creators meant it). First of all, you create the VBO, you bind it, add some data to it and then upload the data to the GPU. Then your buffer is ready to be used! Example code will be discussed at the end of this article, once we understand the last important concept of this tutorial - the Vertex Array Object.

I know it's already been a lot in this article, but hold on! This one is really the last and then we finally get to the actual rendering! As mentioned before, VBOs can contain arbitrary data. But how do we tell OpenGL how to interpret them, what to do with those raw bytes? That's where the Vertex Array Object (VAO) comes in! With VAOs, we can tell OpenGL (or our shaders) how the data in VBOs are stored. A VAO simply stores which buffers contain which data, together with all those settings. So one can create a VAO just once, set everything up with the VBOs and then just reuse the VAO for rendering!

Similar to a VBO, a VAO is created using the glGenVertexArrays function. Once it is created, we have to bind it in order to work with it, using the glBindVertexArray(vaoID) function, which takes the generated VAO ID as a parameter. Now we're ready to work with it.

In the old OpenGL, you had things like vertex position, texture coordinate, normal or color. They are just four examples of a more general concept called vertex attributes. With OpenGL 4, you define arbitrary vertex attributes, where each attribute has its index (location). It is really up to our imagination what attributes we use - we can even have - I don't know - a lucky number assigned to every vertex. To set up one vertex attribute, we have to call glEnableVertexAttribArray(index) and subsequently set the vertex attribute data format using glVertexAttribPointer(GLuint index, GLint size, GLenum type, GLboolean normalized, GLsizei stride, const GLvoid * pointer). It looks really complicated at the moment, but worry not - the explanation is in the section below, with example code from this tutorial. It's just easier to explain with working code than in theory, without an example.

Let's now put all the things we've learned today - Shader, Shader Program, Vertex Buffer Object and Vertex Array Object into working code. Below, you can see the initializeScene() function of this tutorial:

    void OpenGLWindow::initializeScene()
    {
        glClearColor(0.0f, 0.5f, 1.0f, 1.0f);

        vertexShader.loadShaderFromFile("data/shaders/tut002/shader.vert", GL_VERTEX_SHADER);
        fragmentShader.loadShaderFromFile("data/shaders/tut002/shader.frag", GL_FRAGMENT_SHADER);

        if (!vertexShader.isLoaded() || !fragmentShader.isLoaded())
        {
            closeWindow(true);
            return;
        }

        mainProgram.createProgram();
        mainProgram.addShaderToProgram(vertexShader);
        mainProgram.addShaderToProgram(fragmentShader);

        if (!mainProgram.linkProgram())
        {
            closeWindow(true);
            return;
        }

        glGenVertexArrays(1, &mainVAO); // Creates one Vertex Array Object
        glBindVertexArray(mainVAO);

        glm::vec3 vTriangle[] = { glm::vec3(-0.4f, 0.1f, 0.0f), glm::vec3(0.4f, 0.1f, 0.0f), glm::vec3(0.0f, 0.7f, 0.0f) };
        glm::vec3 vQuad[] = { glm::vec3(-0.2f, -0.1f, 0.0f), glm::vec3(-0.2f, -0.6f, 0.0f), glm::vec3(0.2f, -0.1f, 0.0f), glm::vec3(0.2f, -0.6f, 0.0f) };

        shapesVBO.createVBO();
        shapesVBO.bindVBO();
        shapesVBO.addData(vTriangle);
        shapesVBO.addData(vQuad);
        shapesVBO.uploadDataToGPU(GL_STATIC_DRAW);

        glEnableVertexAttribArray(0);
        glVertexAttribPointer(0, 3, GL_FLOAT, GL_FALSE, sizeof(glm::vec3), 0);
    }

Let's just follow the code now. We first load both shaders, vertex and fragment, from their files (below, we will discuss the shaders as well). If either of them has not been loaded, we quit the application. Afterwards, we create the shader program and add the vertex and fragment shader to it. If linking the two fails, we quit the application as well.

Now that the shader program is ready, we shall create one VAO and one VBO. You may see that I have created two arrays of vertices - one is called vTriangle and one is called vQuad. vTriangle contains three vertices that will render a triangle, and vQuad contains four vertices that will render a quad using a triangle strip (which actually creates two triangles). We add those two arrays to our VBO by calling shapesVBO.addData(). Because addData() is a template, the type T is deduced as the whole array type (for example glm::vec3[3] for vTriangle), so the sizeof(T) it passes on gives us exactly the size of the data in bytes (it's sizeof(glm::vec3) times the length of the array). At the end, we upload the data to the GPU using the uploadDataToGPU function.

WARNING: This sizeof trick works only with statically defined arrays. With dynamically allocated arrays accessed through pointers it won't work and you get the size of the pointer instead!
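The difference from the warning above can be sketched in plain C++. Vec3 below is only a stand-in for glm::vec3 (assumed here to be three tightly packed floats), and both helper functions are hypothetical names used for illustration:

```cpp
#include <cstddef>

// Stand-in for glm::vec3 (assumption: three tightly packed floats).
struct Vec3 { float x, y, z; };

// Size in bytes of a statically sized array; N is deduced by the compiler,
// so this cannot be called with a plain pointer by mistake.
template <typename T, std::size_t N>
constexpr std::size_t arraySizeBytes(const T (&)[N])
{
    return sizeof(T) * N;
}

// With a pointer, the element count has to be passed explicitly, because
// sizeof(pointer) is only the size of the pointer itself.
constexpr std::size_t pointerSizeBytes(const Vec3*, std::size_t count)
{
    return sizeof(Vec3) * count;
}
```

For a static Vec3 quad[4], sizeof(quad) and arraySizeBytes(quad) agree, while for a Vec3* obtained from new Vec3[4], sizeof only reports the pointer size and the count must be carried along separately.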

All that remains now is to tell OpenGL what those data mean (remember, it's always just bytes for OpenGL). First of all, we enable one vertex attribute by calling glEnableVertexAttribArray(0). We could use some other number (up to the maximum number of attributes, which can be queried from OpenGL), but we systematically start from 0 - why would we start with number 5? The attribute with index 0 will be the position of our vertices and that is how we shall treat it in the shader code. Now the complicated call to glVertexAttribPointer follows. The first parameter is the vertex attribute index (it's 0). The second is the number of components per attribute. In our case, we are using 3 floats X, Y, Z to define one vertex position. The third parameter is the data type and it's float in our case. The fourth parameter says whether the data should be normalized when we access them - honestly, I have never set this value to true myself; it matters for integer data (for example unsigned bytes 0 to 255 mapped to 0.0 to 1.0) and has no effect on floats, so leave it false for now. The fifth parameter is the stride - the byte offset between two consecutive attributes. In our case it's sizeof(glm::vec3), because the vertex positions are tightly packed in the array (there is nothing else between vertex position N and vertex position N+1); passing 0 tells OpenGL the same thing. And the last, sixth parameter is the offset of the first occurrence of this attribute within the VBO data. Vertex positions start at the very beginning of our VBO, so the offset is 0. Be careful, this offset is passed as a void pointer: the literal 0 converts on its own, but any other offset has to be explicitly typecast to (GLvoid*). An important thing to note is that glVertexAttribPointer operates on the currently bound VBO!
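Stride and offset become more interesting once a vertex carries more than a position. The sketch below assumes a hypothetical interleaved layout (position followed by color) that this tutorial does not use; it only shows how the two numbers would be computed:

```cpp
#include <cstddef>

// Hypothetical interleaved vertex (not used in this tutorial):
// a position followed by a color, both stored as three floats.
struct Vertex
{
    float position[3];
    float color[3];
};

// Stride: number of bytes from the start of one vertex to the start of the next.
constexpr std::size_t kStride = sizeof(Vertex);

// Offsets: where each attribute begins inside a single vertex.
constexpr std::size_t kPositionOffset = offsetof(Vertex, position);
constexpr std::size_t kColorOffset = offsetof(Vertex, color);

// Byte position of an attribute of the n-th vertex within the buffer.
constexpr std::size_t attributeByteOffset(std::size_t offset, std::size_t stride, std::size_t n)
{
    return offset + stride * n;
}
```

With such a layout, the position attribute would be set up with stride kStride and pointer (GLvoid*)kPositionOffset, and a second attribute for the color with the same stride and pointer (GLvoid*)kColorOffset.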

Before getting to the rendering commands, let's have a look at the vertex shader:

    #version 440 core

    layout(location = 0) in vec3 vertexPosition;

    void main()
    {
        gl_Position = vec4(vertexPosition, 1.0);
    }

At the top of the file, we say which version of GLSL we are using. The important part of this shader is the layout(location = 0) line. With that line we're saying that the input data to the vertex shader with index 0 (the vertex attribute index) is a vec3, a three-component vector, and that it is the position of the vertex. Then you can see the void main() function, just like in a C program. It gets called for every vertex that we process by rendering. We don't do anything fancy here, we just set the built-in variable gl_Position, which should receive the homogeneous vertex position (that is the reason we need to add 1.0 at the end; it is the w component of the homogeneous coordinate). For more information, refer to the official documentation of gl_Position, and for some information about what homogeneous coordinates are, I have found this nice article: Explaining homogeneous coordinates and projective geometry.
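What the extra w component does can be sketched in a few lines of C++. Vec3 and Vec4 are stand-ins for the GLSL types and both function names are hypothetical; the division by w is the step that turns a homogeneous position back into an ordinary 3D one:

```cpp
// Stand-ins for the GLSL vec3 / vec4 types (hypothetical, for illustration only).
struct Vec3 { float x, y, z; };
struct Vec4 { float x, y, z, w; };

// Mirrors vec4(vertexPosition, 1.0) from the vertex shader above.
Vec4 toHomogeneous(const Vec3& p)
{
    return {p.x, p.y, p.z, 1.0f};
}

// Division by w: converts a homogeneous position back to a 3D position.
Vec3 perspectiveDivide(const Vec4& p)
{
    return {p.x / p.w, p.y / p.w, p.z / p.w};
}
```

With w equal to 1.0 the division changes nothing, which is why our triangle and quad end up exactly where their coordinates say.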

Last thing that we need to have a look at before rendering is the fragment shader:

    #version 440 core

    layout(location = 0) out vec4 outputColor;

    void main()
    {
        outputColor = vec4(1.0, 1.0, 1.0, 1.0);
    }

The first line is again the version of GLSL that we are using. The second line with layout specifies what outputs the fragment shader has. We have only one output from this fragment shader (the white color) and we output it to location 0. Location 0 is what you see on the screen, but you can also output some more stuff that won't be visible (off-screen). You can even try it yourself: if you output to location 1 instead, you won't see anything (tested on nVidia hardware at least). And finally, in the main function, we set the output variable to white. You can change this vector of ones to something else, let's say the red color vec4(1.0, 0.0, 0.0, 1.0). The last component is alpha, which usually has something to do with transparency and blending. At the moment, just set it to 1.0.
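If you are used to the 0 to 255 colors from the old glColor3ub calls, the shader colors are the same values scaled to the 0.0 to 1.0 range. A minimal sketch of that conversion follows; the helper name toShaderColor is hypothetical:

```cpp
#include <array>
#include <cstdint>

// Converts a 0..255 color (as used by glColor3ub) to the 0.0..1.0 RGBA
// components that a vec4 color in the fragment shader expects.
// Alpha defaults to fully opaque.
std::array<float, 4> toShaderColor(std::uint8_t r, std::uint8_t g, std::uint8_t b, std::uint8_t a = 255)
{
    return { r / 255.0f, g / 255.0f, b / 255.0f, a / 255.0f };
}
```

So the red glColor3ub(255, 0, 0) from the beginning of this article corresponds to vec4(1.0, 0.0, 0.0, 1.0) in the shader.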

Now we're really ready to render something! So let's have a look at renderScene() function:

    void OpenGLWindow::renderScene()
    {
        glClear(GL_COLOR_BUFFER_BIT | GL_DEPTH_BUFFER_BIT);

        mainProgram.useProgram();
        glBindVertexArray(mainVAO);

        glDrawArrays(GL_TRIANGLES, 0, 3);      // Triangle: vertices 0, 1, 2
        glDrawArrays(GL_TRIANGLE_STRIP, 3, 4); // Quad: vertices 3, 4, 5, 6
    }

After clearing the screen, we must say which shader program we want to use for rendering. We also have to bind our VAO, which has all those vertex attributes set up. Then we call glDrawArrays two times. This function takes three parameters - what we are drawing, from which vertex we start, and how many vertices we want to use. In the first case, we are rendering one triangle that's at the start of our VBO - that's why we call this function with GL_TRIANGLES, starting index 0 and 3 for the number of vertices (a triangle has 3 vertices).

The second call uses the GL_TRIANGLE_STRIP rendering mode - with it you can draw multiple triangles with fewer vertices. Long story short - every three consecutive vertices form a triangle. We want to render our quad, so our rendering mode is GL_TRIANGLE_STRIP, starting from vertex index 3 and using 4 vertices (4 vertices = 2 consecutive triplets, 0,1,2 and 1,2,3 counted from the starting vertex = 2 triangles). As homework, you can examine other rendering modes; you can find them in the official documentation of glDrawArrays. To see how a triangle strip works, refer to the Wikipedia Triangle Strip page, I think it's pretty well explained.
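The "every three consecutive vertices" rule can be written down as a tiny function. This is just a sketch of the counting, with a hypothetical function name; it ignores the winding order of the individual triangles:

```cpp
#include <array>
#include <vector>

// Lists the vertex index triplets covered by a triangle strip that starts
// at vertex `first` and uses `count` vertices: every three consecutive
// vertices form one triangle, so there are count - 2 triangles in total.
std::vector<std::array<int, 3>> stripTriangles(int first, int count)
{
    std::vector<std::array<int, 3>> triangles;
    for (int i = 0; i + 2 < count; i++)
        triangles.push_back(std::array<int, 3>{first + i, first + i + 1, first + i + 2});
    return triangles;
}
```

For our quad, stripTriangles(3, 4) gives the two triplets 3,4,5 and 4,5,6, which are the two triangles drawn by glDrawArrays(GL_TRIANGLE_STRIP, 3, 4).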

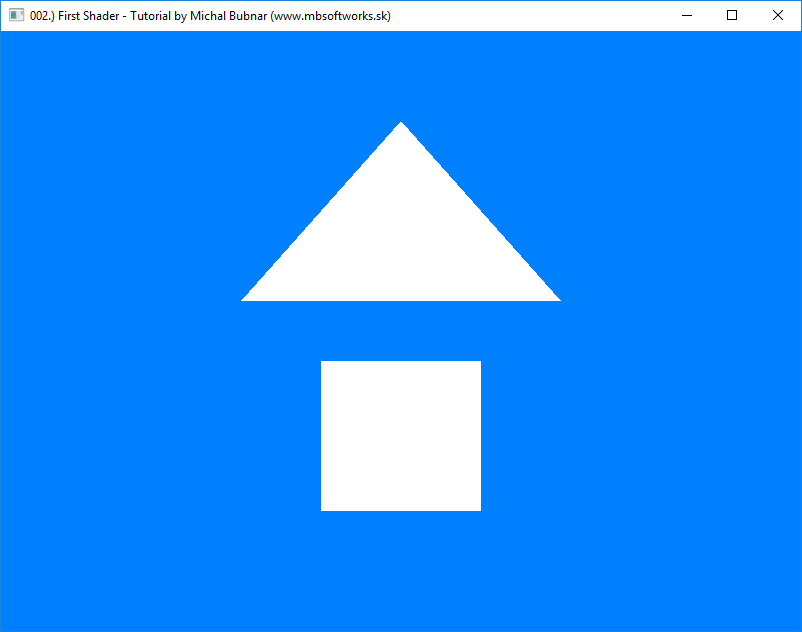

Wow, after all this work, we finally get to this:

I mean - that much code, that much knowledge, and all I get is a triangle and a quad? I could draw that in mspaint faster. Yeah, I admit, it is really difficult to set up rendering in modern OpenGL, but from this point on, it won't be that difficult!

In the next tutorial, 003.) Adding Colors, we will add colors to our triangle and quad, so that they look more interesting than just plain white! If you have really read through this article and understood most of it, then you did an impressive job!

Download 118 KB (1130 downloads)
